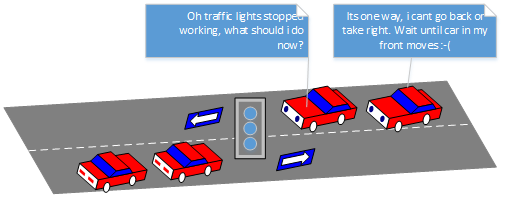

Are autonomous cars designed to handle every situation? What happens when they reach a point where they have not been trained to react to a particular situation? A major difference between autonomous cars and human drivers is adherence to traffic rules. When we need to steer the car away from an intersection where construction is in progress, or out of a traffic jam caused by an accident, we humans do not mind breaking the rules. Autonomous cars, however, are not trained to break rules when required, because the situations in which rules must be broken are hard to anticipate in advance.

This would mean the autonomous car stays stranded until external help or support arrives, and the occupants inside the car are stranded too. What kind of support structure can be deployed in these scenarios? I can think of the two solutions below:

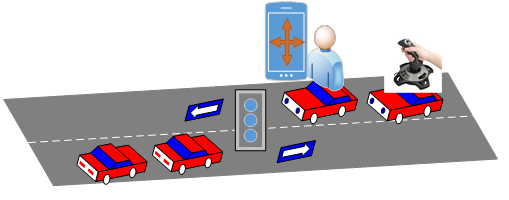

- Provide a way for occupants to steer the car out of these situations: a joystick inside the car for manual override, or a mobile app that acts like a game controller. Liability for anything that happens during manual intervention would lie with the user operating the car.

- A remote driver who can steer the car out of these scenarios by accessing it remotely, with the help of live video streams from the car. Liability for any untoward incident lies with the company that operates the car remotely, and the operator should have to pass multiple security levels before getting access to the car (they can take control only once the user accepts).
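To make the hand-over rules in the two options concrete, here is a minimal sketch of how they might be modeled. Everything in it is hypothetical and for illustration only: the names `Car`, `Mode`, `occupant_takeover`, `remote_takeover`, and `LIABLE_PARTY` are my own, the "security levels" are reduced to a list of pass/fail flags, and no real vehicle API is implied.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    AUTONOMOUS = "autonomous"
    OCCUPANT_OVERRIDE = "occupant_override"
    REMOTE_DRIVER = "remote_driver"


# Who is liable in each override mode, following the two options above.
# Autonomous mode is left out because the post does not assign it.
LIABLE_PARTY = {
    Mode.OCCUPANT_OVERRIDE: "occupant",
    Mode.REMOTE_DRIVER: "remote operating company",
}


@dataclass
class Car:
    mode: Mode = Mode.AUTONOMOUS
    stuck: bool = False  # True when the car cannot proceed on its own

    def occupant_takeover(self) -> bool:
        """Option 1: the occupant steers manually (joystick or app)."""
        if not self.stuck:
            return False
        self.mode = Mode.OCCUPANT_OVERRIDE
        return True

    def remote_takeover(self, checks_passed: list[bool], occupant_accepts: bool) -> bool:
        """Option 2: a remote driver takes over.

        Assumed rule: every security check must pass AND the occupant
        must accept before control is handed over.
        """
        if not self.stuck:
            return False
        if not checks_passed or not all(checks_passed):
            return False
        if not occupant_accepts:
            return False
        self.mode = Mode.REMOTE_DRIVER
        return True

    def liable_party(self) -> str | None:
        """Return the liable party for the current mode, if assigned."""
        return LIABLE_PARTY.get(self.mode)
```

For example, calling `remote_takeover([True, True], occupant_accepts=False)` on a stuck car leaves it in autonomous mode, because the occupant never accepted. The point of the design is that both checks gate the mode change, so liability only shifts once control has actually been handed over.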
